Confused About n8n Pricing in 2025? Here’s How to Choose the Right Plan

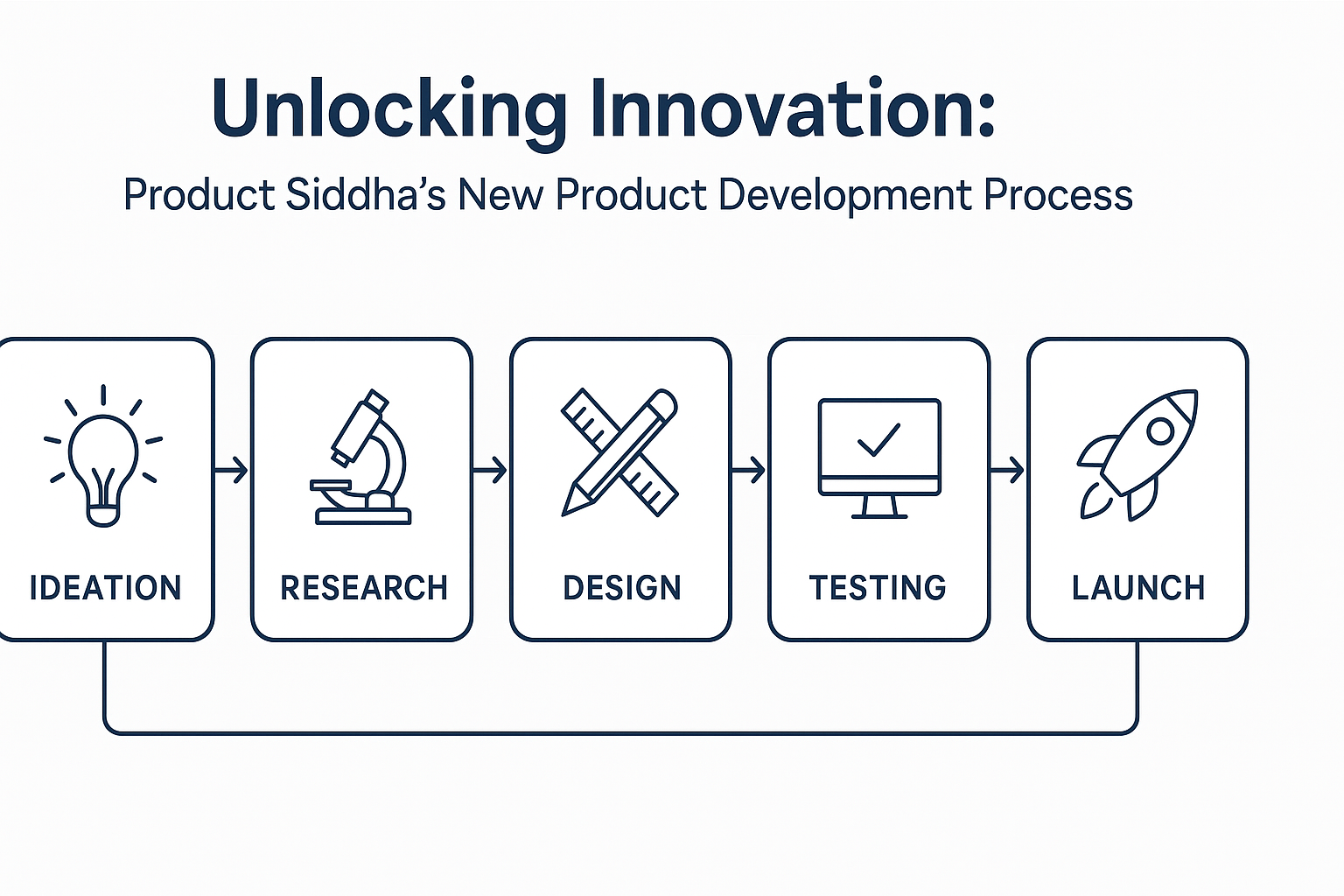

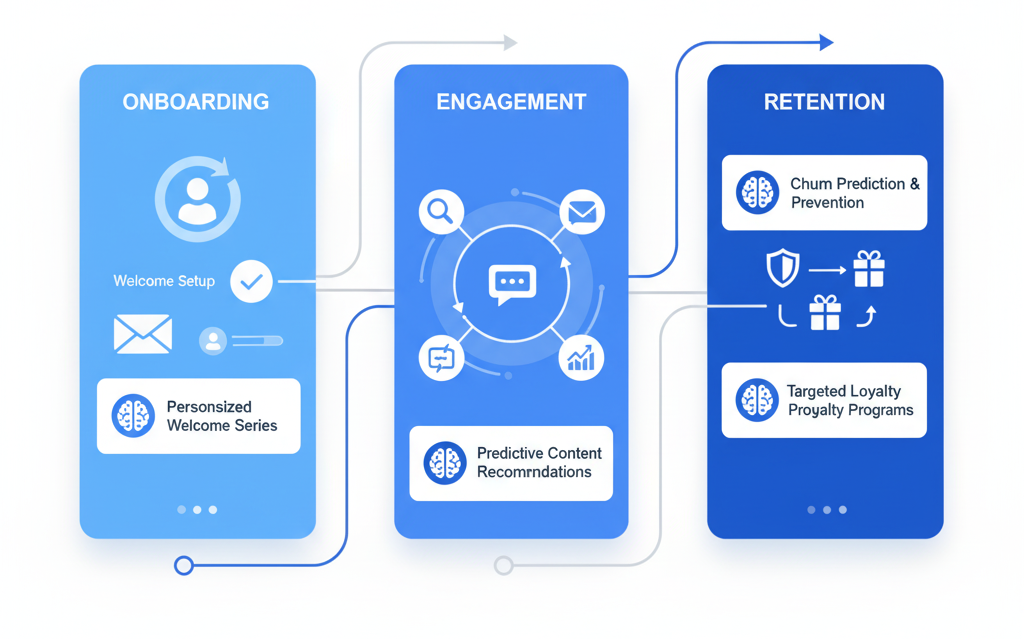

Why n8n Pricing Confuses So Many Teams

If you’ve explored automation tools lately, you’ve probably noticed that n8n’s pricing has changed a lot. What started as a free, source-available workflow builder has grown into a full automation platform with paid cloud tiers, usage limits, and premium support.

At Product Siddha, we help businesses compare automation platforms, and n8n is often one of the top choices. But many founders and teams get stuck trying to understand what they’re really paying for. So here’s a simple, startup-friendly breakdown of n8n’s 2025 pricing, its hidden costs, and when each plan makes sense.

1. The n8n Plans at a Glance

| Plan | Best For | Cost (Monthly) | Executions (per month) | Users | Hosting | Support |
|---|---|---|---|---|---|---|
| Community (Self-hosted) | Tech-savvy teams | $0 + server cost | Unlimited | Unlimited | Self-managed | Community forum |
| Starter (Cloud) | Solo users | ~$20 | 2,500 | 1 | n8n Cloud | Basic |
| Pro (Cloud) | Small teams | ~$50–$80/user | 5,000+ | Multi-user | n8n Cloud | Priority |
| Enterprise | Large orgs | Custom (~$1,000+) | Custom | Unlimited | Flexible | Dedicated |

2. Community Edition – Free, but Not Really “Free”

The Community Edition is free to use and source-available under n8n’s fair-code Sustainable Use License. You can install it on your own server and run the core automation features without execution limits (a few enterprise features, such as SSO, still require a paid license). Sounds great, right? But here’s the catch: you handle everything.

What you get:
- Unlimited workflow executions
- Full control over your data
- Access to all community nodes and core features

What you manage yourself:
- Server setup and hosting
- Security patches and updates
- Backups and monitoring
- Troubleshooting through community support

Hidden costs: Hosting can cost $50–$500 per month, depending on your setup. Add 10–15 hours of DevOps time monthly for maintenance, and the “free” version can easily cost more than a paid plan once you factor in team time.

Best for: Developers, technical founders, and teams with in-house DevOps who want complete control and scalability.

3. Starter Plan – Cloud Hosting for Solo Users

The Starter Plan is the easiest way to try n8n without worrying about infrastructure. It’s hosted on n8n’s cloud and designed for individuals and micro businesses.

What you get:
- Hosted solution (no servers needed)
- 2,500 workflow executions/month
- 1 user account
- Basic security and community support

What to watch for:
- Extra executions cost $10–$15 per 1,000 runs
- No team collaboration (single-user only)
- Limited support if things go wrong

Best for: Freelancers, solo founders, or small businesses testing automation or running light workflows.

Example: A solo marketer automating lead collection and email alerts can run comfortably within 2,000–2,500 executions per month. Once their workflows grow, they’ll likely need to upgrade to Pro.

4. Pro Plan – For Growing Teams

The Pro Plan is where most growing teams land. It balances cost, capacity, and collaboration tools, which makes it a good fit for startups scaling their operations.

What you get:
- 5,000 executions/month (expandable)
- Multiple user access
- Role-based permissions
- Workflow sharing and version control
- Priority email support

Why it’s worth it: When automation becomes part of your daily operations (syncing CRMs, triggering emails, generating reports), you’ll need multiple users and faster support. The Pro Plan scales with your team and keeps automation reliable.

Estimated cost: $50–$80 per user per month, depending on usage.

Best for: Startups and teams that rely on automation daily and need both collaboration and stability.

5. Enterprise Plan – For Large, Regulated Organizations

If you’re a larger business or need guaranteed uptime and compliance, the Enterprise Plan is your match.

Key features:
- Custom execution and user counts
- Dedicated support and SLAs
- Advanced security controls
- Option for dedicated hosting
- Training and onboarding for big teams

Pricing: Starts around $1,000/month but varies based on setup.
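Before looking at who Enterprise suits, it can help to put the four price points on one scale. The sketch below is a rough monthly-cost calculator built only from the figures quoted in this article, not from n8n’s official price list. The function names are hypothetical, and three inputs are our own assumptions: a $60/hour DevOps rate in the usage example, Starter overage billed linearly at $12.50 per 1,000 runs (the midpoint of the quoted $10–$15), and Pro billed per user as described above.

```python
import math

# Figures quoted in this article, not n8n's official price list.
STARTER_BASE = 20.0          # ~$20/month
STARTER_INCLUDED = 2_500     # executions included per month
OVERAGE_PER_1000 = 12.5      # assumption: midpoint of the quoted $10–$15 per 1,000 runs
PRO_PER_USER = (50.0, 80.0)  # quoted range: ~$50–$80 per user per month
ENTERPRISE_FLOOR = 1_000.0   # "starts around $1,000/month"


def community_tco(hosting: float, devops_hours: float, hourly_rate: float) -> float:
    """Self-hosted monthly cost: server bill plus the team time spent maintaining it."""
    return hosting + devops_hours * hourly_rate


def starter_cost(executions: int) -> float:
    """Starter fee plus overage, assuming overage is billed linearly past the included runs."""
    extra = max(0, executions - STARTER_INCLUDED)
    return STARTER_BASE + extra / 1_000 * OVERAGE_PER_1000


def pro_cost(users: int) -> tuple[float, float]:
    """Low and high monthly Pro estimate under the article's per-user figures."""
    low, high = PRO_PER_USER
    return users * low, users * high


def users_to_reach_enterprise_floor() -> tuple[int, int]:
    """Smallest team size at which Pro costs at least ~$1,000/month (high rate, low rate)."""
    low, high = PRO_PER_USER
    return math.ceil(ENTERPRISE_FLOOR / high), math.ceil(ENTERPRISE_FLOOR / low)


if __name__ == "__main__":
    rate = 60.0  # hypothetical DevOps hourly rate
    print("Community, low end: ", community_tco(50.0, 10, rate))   # $50 hosting + 10 h of DevOps
    print("Community, high end:", community_tco(500.0, 15, rate))  # $500 hosting + 15 h of DevOps
    print("Starter at 4,000 runs:", starter_cost(4_000))
    print("Pro, 3 users:", pro_cost(3))
    print("Pro team size at ~$1,000:", users_to_reach_enterprise_floor())
```

Under these assumptions, self-hosting lands at roughly $650–$1,400 a month once maintenance time is priced in, and Pro’s per-user cost reaches the ~$1,000 Enterprise floor somewhere between 13 and 20 users.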
Best for: Corporates, fintech, and healthcare companies with complex automation needs and strict data regulations.

6. Understanding the Real Costs

Even though n8n’s listed pricing looks simple, your total cost depends on how you use it. Let’s break down the hidden costs and key variables that affect your decision.

a. Execution Overages

Every cloud plan has a workflow execution cap. When you exceed it, overage fees kick in, and they can pile up fast.

Pro Tip: Monitor execution usage weekly. Optimize workflows by combining triggers, batching tasks, or cutting unnecessary runs; n8n counts each complete workflow run as one execution, however many steps it contains. Product Siddha has seen teams cut execution costs by 20–40% just through better workflow design.

b. Infrastructure and Maintenance

If you self-host n8n (Community Edition), you’re responsible for:
- Server hosting ($50–$500/month)
- Security patches and monitoring
- Backup management
- DevOps hours for updates

For non-technical teams, these hidden costs often outweigh the savings of self-hosting.

c. Integration & API Costs

Many workflows rely on third-party APIs (like HubSpot, Airtable, or Slack). Some of these APIs have premium pricing or request limits, which add to your total automation cost. Always check whether your workflow uses any paid connectors or API subscriptions before committing.

7. How to Choose the Right Plan (Step-by-Step)

Let’s make this simple. Here’s the step-by-step checklist Product Siddha uses with clients when selecting an n8n plan:

1. Check your technical ability
   - DevOps or tech team → Community Edition
   - No in-house tech team → Cloud (Starter or Pro)
2. Estimate your execution volume
   - Light usage (<2,500/month) → Starter
   - Moderate (5,000–10,000/month) → Pro
   - Heavy or custom (>20,000/month) → Enterprise or self-hosted
3. Evaluate team access
   - Solo user → Starter
   - Team collaboration → Pro or higher
4. Consider data privacy
   - Regulated or sensitive data → Community (self-hosted)
   - No strict requirements → Cloud
5. Plan for growth
   - Expect workflow volume to double in 6–12 months.
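The checklist can also be read as a small decision function. This is a hypothetical sketch, not Product Siddha’s actual tool: the `Needs` fields and the `recommend_plan` name are ours, and the order in which the steps are checked is an assumption. Two further assumptions fill gaps the checklist leaves open: volumes between its bands (2,500–5,000 and 10,000–20,000) go to Pro, and regulated or heavy workloads without an in-house tech team go to Enterprise, in line with section 5.

```python
from dataclasses import dataclass


@dataclass
class Needs:
    """Hypothetical inputs, one per checklist step."""
    has_devops: bool            # step 1: in-house DevOps or tech team?
    monthly_executions: int     # step 2: current executions per month
    users: int                  # step 3: people who need access
    regulated_data: bool        # step 4: regulated or sensitive data?
    growth_factor: float = 2.0  # step 5: volume expected to double in 6–12 months


def recommend_plan(n: Needs) -> str:
    """Map checklist answers to a plan name (a sketch, not an official rule)."""
    projected = n.monthly_executions * n.growth_factor  # size for future, not current, volume

    if n.has_devops:
        # Steps 1 and 4: teams that can run servers can self-host, which also keeps data in-house.
        return "Community (self-hosted)"
    if n.regulated_data or projected > 20_000:
        # Assumption: without a tech team, regulated or heavy workloads go to Enterprise.
        return "Enterprise"
    if n.users == 1 and projected <= 2_500:
        # Steps 2 and 3: one user with light projected volume fits Starter.
        return "Starter"
    # Teams, and solo users projected above 2,500 runs, land on Pro (up to 20,000 runs).
    return "Pro"


if __name__ == "__main__":
    solo = Needs(has_devops=False, monthly_executions=1_000, users=1, regulated_data=False)
    growing = Needs(has_devops=False, monthly_executions=1_500, users=1, regulated_data=False)
    print(recommend_plan(solo))     # → Starter (projected 2,000 runs)
    print(recommend_plan(growing))  # → Pro (projected 3,000 runs)
```

Sizing on the projected volume rather than today’s is what step 5 asks for: a solo user at 1,500 executions fits Starter today but is pointed to Pro once the expected doubling is included.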
Start with the lowest plan that can handle future growth without constant upgrades.

8. Real-World Examples

Startup Example: SaaS Founders
A small SaaS team uses n8n to automate customer onboarding and billing. They started on the Starter Plan to test automation but quickly hit its limits as their user base grew. Upgrading to Pro gave them more executions, multi-user access, and better reliability.

Agency Example: Marketing Team
A five-person digital agency automates client reports and campaign updates. They needed collaboration, version control, and reliable support, so they chose the Pro Plan at around $240/month for 3 user seats. The automation saves 15+ hours per week, easily covering the plan’s cost.

Tech Example: Self-Hosting
A tech startup