Product Discovery in the Age of AI: New Playbooks for PMs

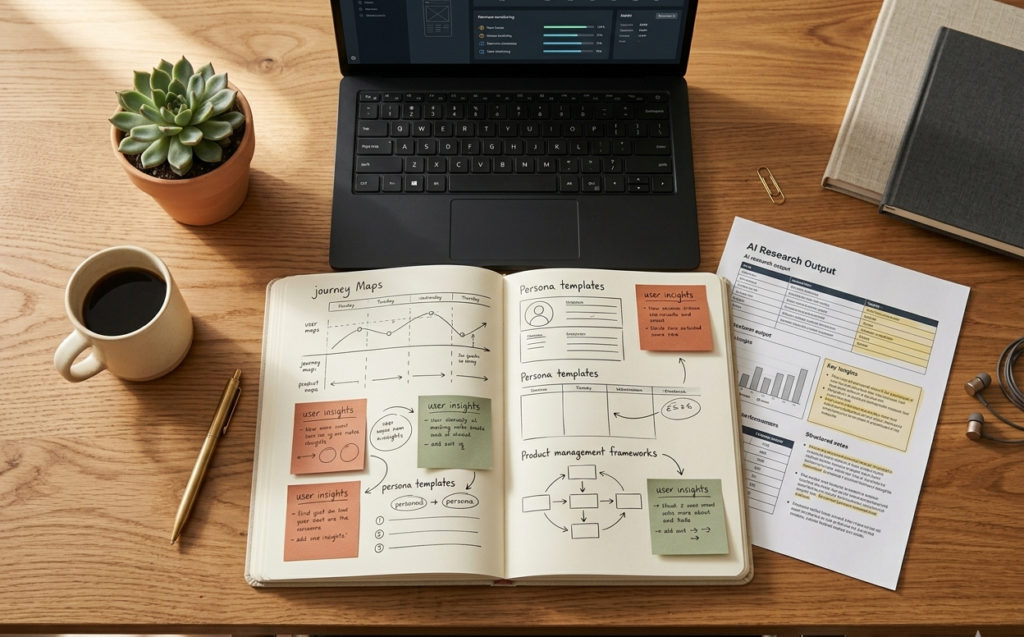

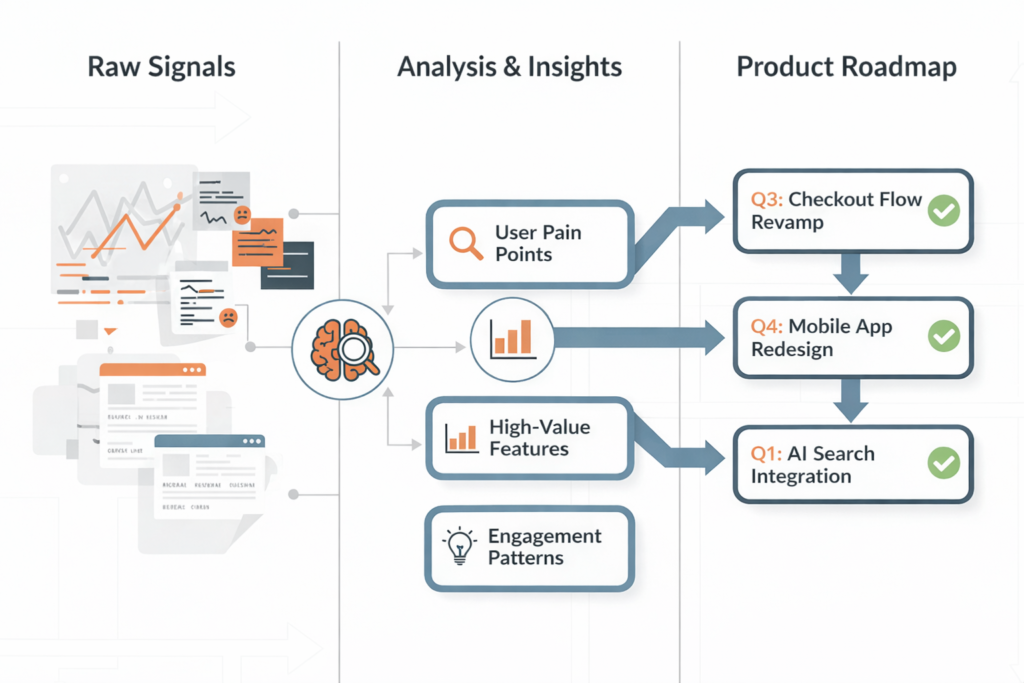

A Shift in How Products Begin

Product discovery has always been about understanding users before building solutions. That principle has not changed. What has changed is the speed and depth at which insights can be gathered.

In earlier years, discovery relied heavily on interviews, surveys, and intuition. Today, AI-assisted tools allow product teams to observe behavior, test ideas, and refine direction in a much shorter time. Yet faster access to data has introduced a new challenge. Teams now face more signals than they can interpret.

For teams working with Product Siddha, product discovery is treated as a structured discipline. AI is used as support, not as a replacement for judgment.

What Product Discovery Means in 2026

Product discovery is the process of identifying the right problem and validating the right solution before full development begins. A sound discovery process answers three questions:

- Who is the user?
- What problem do they face?
- Why does the problem matter enough to solve?

AI helps gather evidence for these questions, but it does not decide the answers.

The Role of AI in Discovery Work

AI has introduced new ways to study users and markets. It processes large data sets quickly and highlights patterns that might otherwise go unnoticed.

Key Applications

| Area | Traditional Method | AI-Assisted Method |
|---|---|---|
| User Research | Interviews and surveys | Behavioral data analysis and clustering |
| Market Signals | Manual tracking | Automated trend detection |
| Feedback Analysis | Reading responses | Sentiment and intent analysis |
| Experimentation | Limited testing | Rapid prototype testing |

These capabilities allow product managers to test ideas earlier and refine them with evidence.

AI-Driven Product Discovery Flow

User Signals → Pattern Identification → Hypothesis → Rapid Testing → Insight → Iteration

This cycle reflects continuous learning. Discovery is not a single phase. It runs alongside development.
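
One pass through the flow above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Product Siddha's actual tooling: the `Hypothesis` class, the `identify_pattern` and `evaluate` helpers, the theme tags, and the 0.6 threshold are all hypothetical names and values chosen for the example.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Hypothesis:
    """A testable idea whose success bar is fixed before any test runs."""
    statement: str
    metric: str
    success_threshold: float  # hypothetical value, agreed before testing


def identify_pattern(signals):
    """Pattern identification: return the most frequent theme tag."""
    theme, _count = Counter(signals).most_common(1)[0]
    return theme


def evaluate(hypothesis, observed):
    """Insight: compare the observed metric with the predefined threshold."""
    if observed >= hypothesis.success_threshold:
        return "validated: iterate on the solution"
    return "not validated: revisit the problem"


if __name__ == "__main__":
    # Illustrative, made-up signals tagged by theme (not real data).
    signals = ["timely intros", "broad feed", "timely intros", "profile search"]
    theme = identify_pattern(signals)
    hypothesis = Hypothesis(
        statement=f"Users want {theme}",
        metric="share of testers who accept a suggested introduction",
        success_threshold=0.6,
    )
    # Rapid testing: 7 of 10 hypothetical prototype testers accepted.
    print(evaluate(hypothesis, observed=7 / 10))
```

Fixing the threshold before the test runs keeps the result from being reinterpreted afterward. Deciding whether the theme tags are meaningful, and what to do with a failed hypothesis, remains a human judgment.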

A Practical Example: Networking Assistant

A useful case comes from “Building the World’s First AI-Powered Networking Assistant.” The product aimed to connect users based on shared interests and context.

At the discovery stage, the problem was not clearly defined. Users expressed a general need to network better, but their expectations varied. AI-assisted analysis of user interactions helped identify patterns. Users valued timely and relevant introductions rather than broad recommendations.

This insight shaped the product direction. Instead of building a large platform, the team focused on a single feature: context-based matching. Early prototypes tested this feature with a small group. Feedback confirmed its value.

This example shows how AI can guide discovery without replacing human judgment.

New Playbooks for Product Managers

Product managers must adapt their approach to make effective use of AI.

1. Start with Real Signals

Rely on actual user behavior, not assumptions. AI tools can highlight patterns, but these must be interpreted carefully.

2. Form Clear Hypotheses

Every idea should be treated as a hypothesis. Define what success looks like before testing.

3. Test Early and Often

Rapid prototyping allows teams to validate ideas quickly. This reduces wasted effort.

4. Combine Data with Context

Numbers alone do not explain user intent. Combine quantitative data with qualitative insights.

Decision Quality vs Data Volume

| Data Volume | Decision Quality |
|---|---|
| Low | Limited insight |
| Moderate | Balanced understanding |
| High without context | Confusion |
| High with structure | Strong decisions |

More data does not guarantee better decisions. Structure is essential.

Avoiding Common Pitfalls

Despite better tools, product discovery can still fail.

Over-Reliance on AI

Some teams treat AI outputs as final answers. This leads to shallow conclusions.

Ignoring Edge Cases

Patterns often reflect majority behavior. Unique user needs may be overlooked.

Skipping Problem Validation

Teams may move directly to solutions without confirming the problem.

Fragmented Insights

Data from different sources may not align, leading to inconsistent conclusions.

Balancing Speed and Thought

AI allows teams to move faster, but speed must be managed carefully. Quick decisions without reflection can lead to poor outcomes.

Comparison

| Approach | Speed | Depth | Result |
|---|---|---|---|
| Fast without analysis | High | Low | Weak validation |
| Slow traditional method | Low | High | Delayed progress |
| Balanced AI-assisted method | Medium | High | Strong outcomes |

The goal is to maintain depth while improving speed.

Integrating Discovery with Development

Discovery should not be isolated from development. Insights must flow into product decisions continuously.

In “Product Management for UAE’s First Lifestyle Services Marketplace,” early discovery revealed diverse user needs. Instead of building separate solutions, the team identified common patterns. This allowed the creation of a unified service layer. Development proceeded with clarity, reducing rework and confusion.

The Road Ahead

Product discovery in the age of AI offers new opportunities. Teams can learn faster, test ideas earlier, and reduce uncertainty.

Yet these advantages require careful use. AI should support thinking, not replace it. Data should guide decisions, not overwhelm them. Structure should remain at the core of discovery.

Product managers who adapt to this approach will build products that meet real needs. Those who rely only on tools may struggle to find direction.

In the end, product discovery remains a human process. AI simply makes it more informed and more efficient.