How to Automate 80% of Your B2B Lead Enrichment Using Custom AI Workflows

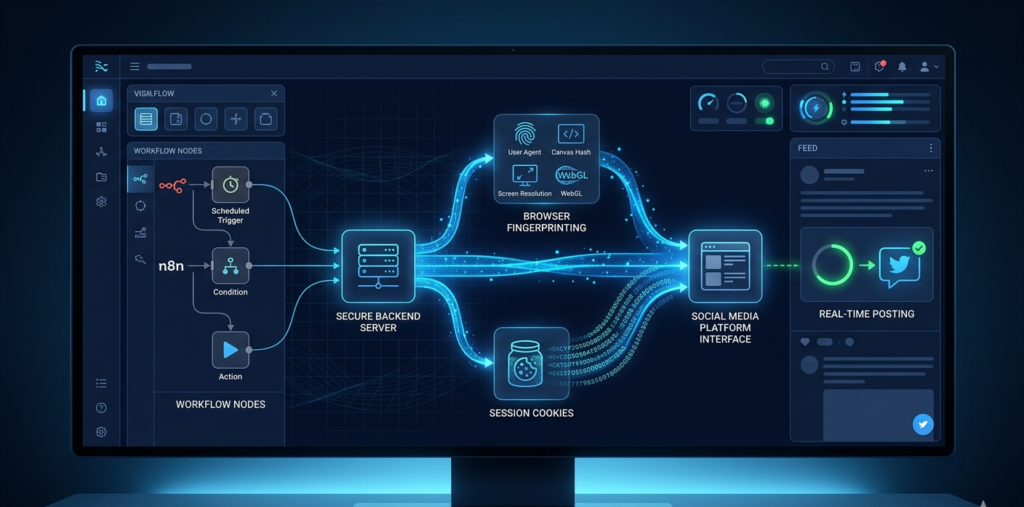

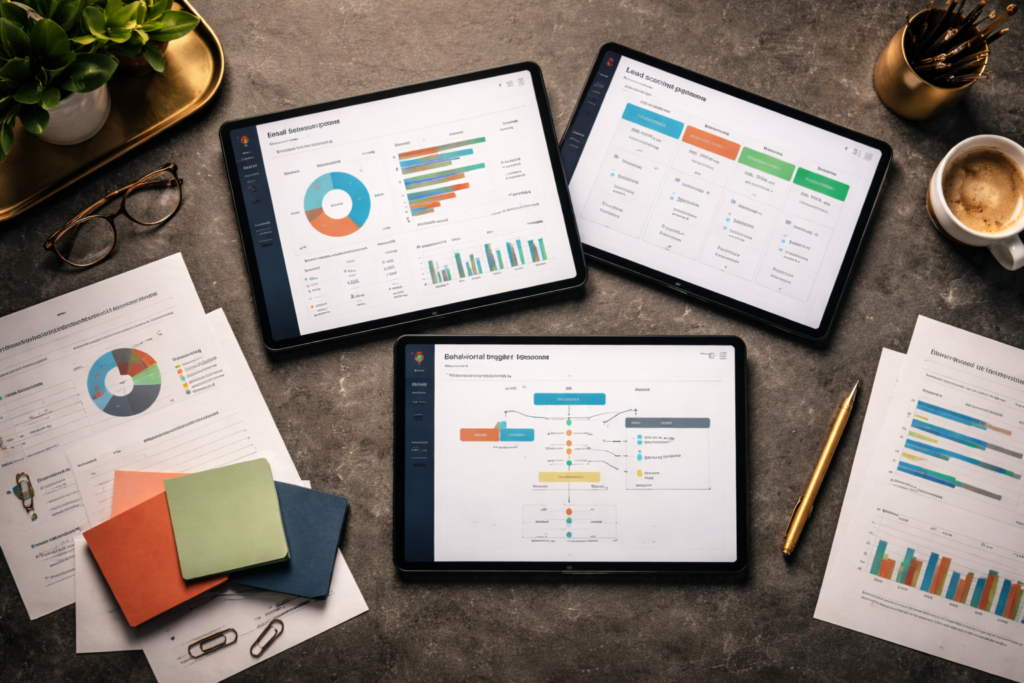

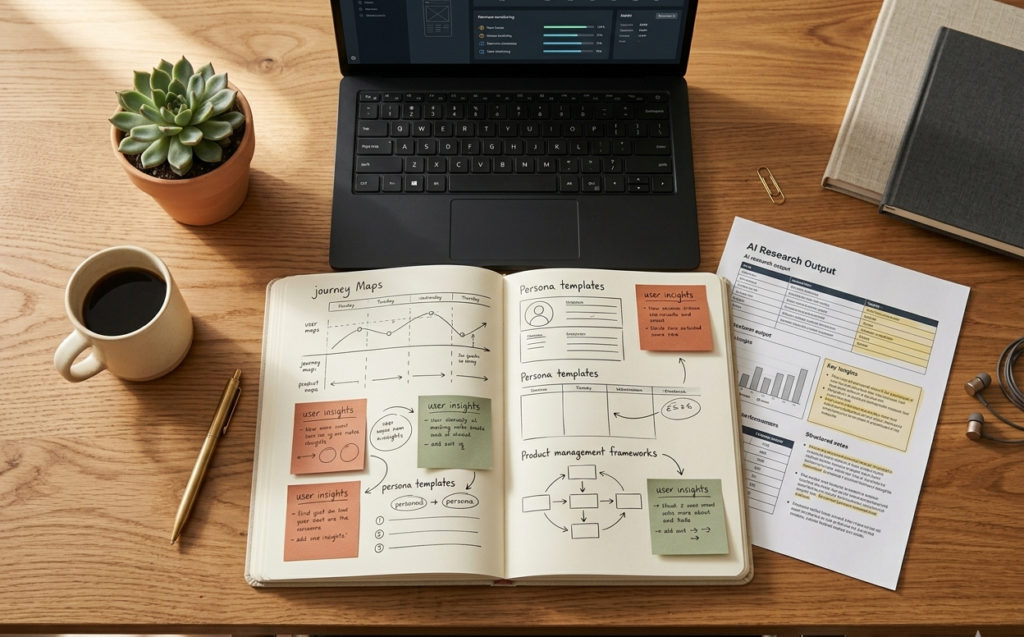

How to Automate 80% of Your B2B Lead Enrichment Using Custom AI Workflows The Lead Problem Most B2B teams today don’t struggle with lead generation – they struggle with lead understanding. You capture leads from: Website forms Ads LinkedIn Events But then the real questions begin: Who is this person? Is this company relevant? Is this worth a sales call? This is where lead enrichment should help – but manually, it becomes: Slow Inconsistent Outdated by the time it’s done At Product Siddha, we solve this by automating enrichment using AI workflows + modern data tools. What Lead Enrichment Really Means Today Modern enrichment is not just adding a job title. A high-quality enriched lead includes: Company size, revenue, industry Decision-maker role & seniority Tech stack (important for SaaS & B2B) Buying intent signals LinkedIn and digital presence Geographic and operational data This data doesn’t come from one place – it comes from multiple tools stitched together intelligently. How Product Siddha Automates Lead Enrichment (Real Stack) Here’s the actual system we build for clients 1. Data Orchestration with Clay (Core Engine) We use Clay as the central enrichment layer. With Clay, we: Pull lead data from forms, CRM, or spreadsheets Enrich using 50+ data providers Run AI-based lookups and transformations Clay acts as the brain of enrichment workflows. 2. Data Sources & Enrichment Tools We Use We don’t rely on one tool – we combine multiple sources for accuracy. Primary Enrichment Tools Clearbit → Company data, employee size, domain insights Apollo.io → Contact data, job roles, emails ZoomInfo → Deep B2B company intelligence Hunter.io → Email verification Snov.io → Contact enrichment + outreach signals 3. Intent & Signal Tools To understand buying readiness: 6sense → Buyer intent tracking Bombora → Topic-level intent signals RB2B (Reveal B2B) → Identify anonymous website visitors 4. AI Processing Layer We apply AI to: Classify industries Score leads Clean messy data Summarize company profiles Tools + Models: OpenAI / LLM APIs Clay AI columns Custom scoring logic 5. Automation & Workflow Tools To connect everything: Zapier → Simple automation flows Make (Integromat) → Advanced multi-step workflows n8n → Custom, self-hosted automation 6. CRM & Activation Layer Final enriched data is pushed into: HubSpot Salesforce Pipedrive With automatic triggers like: Assign sales reps Trigger email sequences Notify high-intent leads End-to-End Workflow (How It Actually Runs) Lead Capture → Clay Enrichment → Data Tools (Clearbit, Apollo, etc.) → AI Processing → Intent Scoring → CRM Update → Sales Action The 80% Automation Rule (Explained Practically) What We Fully Automate Company lookup (Clearbit, ZoomInfo) Contact enrichment (Apollo, Snov) Email verification (Hunter) Industry classification (AI) Lead scoring (custom logic) Data cleanup & formatting What Still Needs Humans Strategic account targeting Enterprise deal qualification Relationship context Why This Works (Compared to Manual Process) Factor Manual Enrichment Product Siddha System Speed Slow Real-time Accuracy Depends on person Multi-source verified Scalability Limited High Consistency Low Structured Actionability Delayed Instant Real Impact for B2B Teams When we implement this system: 1. Faster Lead Response Leads are enriched within seconds, not hours 2. Better Lead Qualification Sales teams only talk to high-quality leads 3. Reduced Manual Work Up to 80% of enrichment effort removed 4. Higher Conversions Because: Messaging is personalized Timing is faster Context is clearer How Product Siddha Builds This for You We don’t just suggest tools – we build the full system. Our Approach: Step 1: Understand Your Sales Process What data actually matters for conversion Step 2: Design Enrichment Logic Custom rules for scoring, classification, routing Step 3: Setup Clay + Data Stack Connect all enrichment tools Step 4: Build Automation Workflows Using Zapier / Make / n8n Step 5: CRM Integration Ensure seamless data flow Step 6: Continuous Optimization Improve accuracy using real data Common Mistakes We Help Avoid Using only one enrichment tool (low accuracy) Enriching unnecessary data fields No validation layer No connection to CRM actions Static workflows that don’t evolve Measuring Success We track outcomes, not just activity: Lead data accuracy Time to first response Sales conversion rates Reduction in manual effort Closing Insight Lead enrichment is no longer a manual task – it’s a system design problem. With the right combination of: Clay (central engine) Multiple data providers (Clearbit, Apollo, ZoomInfo, etc.) AI processing Automation workflows You can automate 80% of enrichment reliably. At Product Siddha, we build these systems end-to-end so your team doesn’t just collect leads, but understands and converts them faster. Because in B2B: Better data → Better conversations → Better revenue