Email Automation Tools Compared: Klaviyo vs HubSpot vs Customer.io

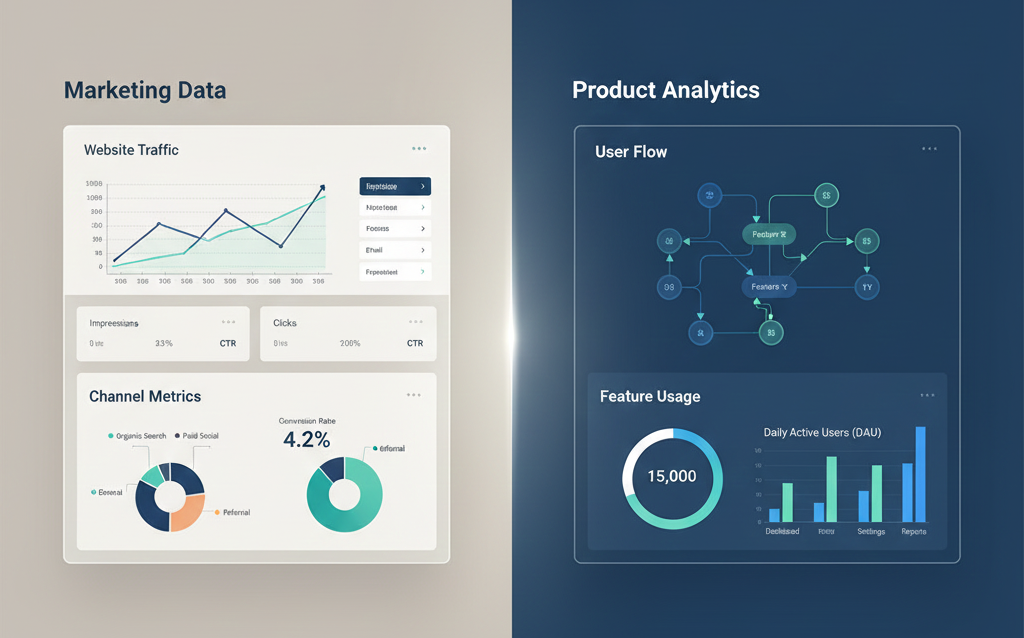

## Choosing the Right System

Email remains one of the most reliable communication channels for businesses. Yet the way it is managed has changed. Simple newsletters have given way to structured automation, where messages respond to user behavior and timing.

Selecting the right platform is not a technical decision alone. It affects how teams manage data, how campaigns are executed, and how revenue is tracked. Many companies struggle because they choose tools without understanding their operational fit.

Teams working with Product Siddha often face this question early. Which platform aligns with their business model and scale?

## What Email Automation Means Today

Email automation now involves more than scheduled campaigns. It includes:

- Behavior-based triggers
- Lifecycle communication
- Personalization based on data
- Integration with CRM and analytics systems

A strong platform should support these functions without adding unnecessary complexity.

## Platform Overview

### Klaviyo

Klaviyo is widely used in e-commerce. It focuses on customer data, segmentation, and revenue tracking.

### HubSpot

HubSpot offers a broader system. It combines CRM, email automation, and sales tools in one platform.

### Customer.io

Customer.io is designed for product-led teams. It allows flexible event-based messaging across email and other channels.

## Feature Comparison

| Feature | Klaviyo | HubSpot | Customer.io |
| --- | --- | --- | --- |
| Core Strength | E-commerce automation | All-in-one CRM | Event-driven messaging |
| Ease of Use | Moderate | High | Moderate |
| Data Handling | Strong segmentation | Centralized CRM | Flexible event tracking |
| Integration | Shopify and e-commerce tools | Wide ecosystem | Developer-friendly APIs |
| Pricing Model | Based on contacts | Tiered plans | Based on usage |

Each platform serves a different type of organization.

## Klaviyo in Practice

Klaviyo is best suited for businesses that rely on repeat purchases. It excels in tracking customer behavior and triggering messages accordingly.
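
To make "triggering messages from customer behavior" concrete, here is a minimal, vendor-neutral sketch of an abandoned-cart rule. It is not Klaviyo's API: the `CartEvent` type, the event names, the `carts_to_remind` function, and the four-hour wait are all assumptions chosen for illustration. The idea is simply that a reminder becomes due when a customer's latest cart update is old enough and no order has followed it.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class CartEvent:
    customer_id: str
    kind: str  # hypothetical event names: "cart_updated" or "order_placed"
    at: datetime


def carts_to_remind(events, now, wait=timedelta(hours=4)):
    """Return customer IDs whose latest cart update is at least `wait` old
    and has not been followed by an order."""
    last_cart, last_order = {}, {}
    for e in events:
        if e.kind == "cart_updated":
            latest = last_cart
        elif e.kind == "order_placed":
            latest = last_order
        else:
            continue  # ignore event kinds this rule does not use
        # Keep only the most recent timestamp per customer and kind.
        if e.customer_id not in latest or e.at > latest[e.customer_id]:
            latest[e.customer_id] = e.at

    due = []
    for customer_id, cart_at in last_cart.items():
        order_at = last_order.get(customer_id)
        ordered_since = order_at is not None and order_at >= cart_at
        if not ordered_since and now - cart_at >= wait:
            due.append(customer_id)
    return sorted(due)


day = datetime(2024, 1, 1)
events = [
    CartEvent("a", "cart_updated", day.replace(hour=10)),
    CartEvent("b", "cart_updated", day.replace(hour=10)),
    CartEvent("b", "order_placed", day.replace(hour=10, minute=30)),
    CartEvent("c", "cart_updated", day.replace(hour=13, minute=30)),
]
print(carts_to_remind(events, now=day.replace(hour=15)))  # ['a']
```

In a hosted platform this logic is usually configured as a flow rather than written by hand, but the same three inputs apply: the triggering event, the waiting period, and the condition that cancels the message.
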
In “Boosting Email Revenue with Klaviyo for a Shopify Brand,” the system focused on abandoned cart recovery and post-purchase engagement. Automated flows were built around user actions.

This approach improved repeat purchases and increased overall revenue. The key advantage was direct integration with e-commerce data.

However, Klaviyo may feel limited for companies that require deeper CRM functionality.

## HubSpot in Practice

HubSpot provides a unified system for marketing and sales. It is useful for companies that need a central platform for customer data.

In “HubSpot Marketing Hub Setup for a Growing Fintech Brand,” the focus was on aligning marketing efforts with sales processes. Email automation was connected to lead scoring and CRM updates.

This created a consistent view of each customer. Teams could track interactions across multiple touchpoints.

HubSpot works well for organizations that prefer a single system rather than multiple tools.

## Customer.io in Practice

Customer.io is designed for flexibility. It allows teams to trigger messages based on specific user actions within a product.

This makes it suitable for SaaS and product-led companies. Messages can be tied to in-app behavior, not just external actions.

For example, onboarding sequences can adapt based on how users interact with a product. This level of control is useful but requires technical setup.

## Platform Fit by Business Type

| Business Type | Recommended Platform |
| --- | --- |
| E-commerce | Klaviyo |
| B2B and Fintech | HubSpot |
| SaaS and Product-Led | Customer.io |

Choosing the right tool depends on how the business operates.

## Key Differences That Matter

### Data Structure

Klaviyo focuses on customer profiles. HubSpot centralizes all customer data. Customer.io relies on event tracking.

### Flexibility

Customer.io offers the most flexibility but requires technical knowledge. HubSpot provides structure with ease of use. Klaviyo balances usability with strong e-commerce features.

### Integration Depth

HubSpot integrates deeply across functions.
Klaviyo integrates well with commerce platforms. Customer.io integrates through APIs.

## Data and Email Performance

In “Product Analytics & Full-Funnel Attribution for a SaaS Coaching Platform,” email performance improved only after data was structured correctly. Campaigns were aligned with user behavior.

This highlights an important point. The platform alone does not determine success. Data quality and workflow design are equally important.

## Strengths and Limitations

| Platform | Strength | Limitation |
| --- | --- | --- |
| Klaviyo | Strong revenue tracking | Limited CRM features |
| HubSpot | Unified system | Higher cost at scale |
| Customer.io | Flexible automation | Requires technical setup |

Understanding these trade-offs helps in making a better decision.

## A Balanced Decision

Email automation tools are not interchangeable. Each platform serves a distinct purpose. The right choice depends on business model, team structure, and data requirements.

- Klaviyo suits e-commerce businesses that rely on customer behavior.
- HubSpot works well for organizations that need a unified system.
- Customer.io fits teams that require flexible, event-driven communication.

For companies working with Product Siddha, the focus remains on alignment. The tool must match the system, not the other way around.

In the long run, success in email automation depends on structure, clarity, and consistent execution. The platform supports the process, but it does not define it.