MVP Development in 2026: Faster, Cheaper, and AI-Assisted

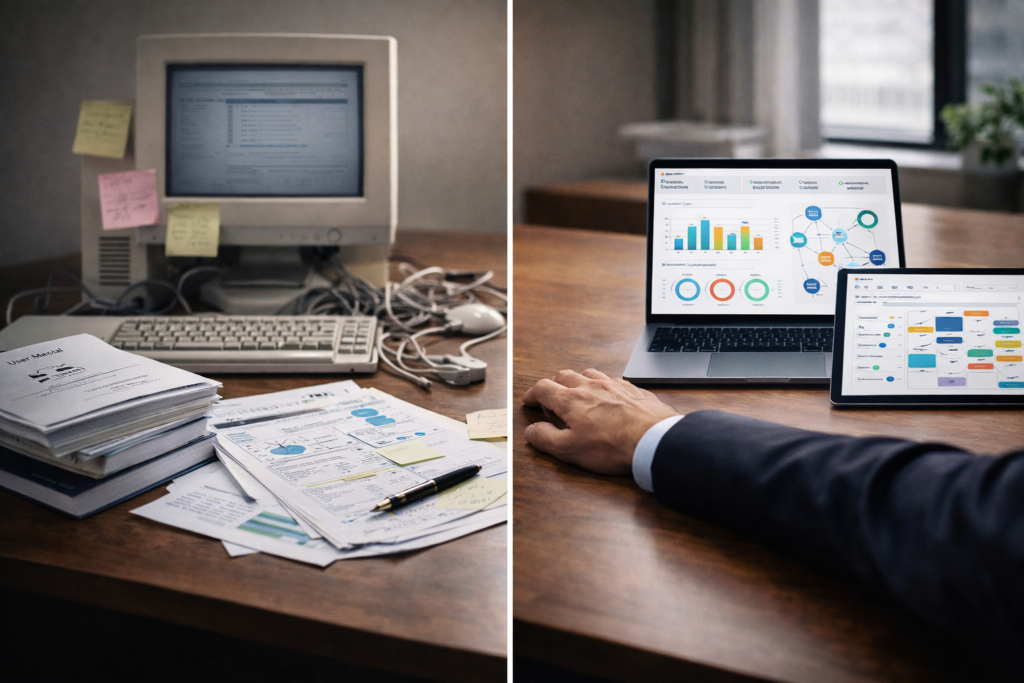

A Different Starting Point

MVP development no longer begins with a full engineering plan. In 2026, it often starts with a working prototype built in days, not months. Founders and product teams now test ideas earlier, with fewer resources, and with clearer feedback loops.

This shift has come from two changes. First, tools have become more accessible. Second, AI-assisted workflows now support research, design, and development.

Yet speed alone does not guarantee success. Many fast-built products fail because they lack direction. For teams working with Product Siddha, MVP development is treated as a structured process. The goal is not speed alone. It is useful validation.

What MVP Development Means Today

MVP development in 2026 focuses on one question. Does the product solve a real problem for a specific user group? This definition is simple, but its execution requires discipline.

A modern MVP includes:

- A narrow feature set tied to a clear use case
- Measurable outcomes such as engagement or conversion
- A feedback mechanism built into the product

The process has changed, but the principle remains the same. Build only what is needed to learn.

How AI Has Changed MVP Development

AI has reduced the effort required at each stage. It does not replace thinking. It reduces repetitive work and speeds up iteration.

Key Areas of Impact

| Stage | Traditional Approach | AI-Assisted Approach |
|---|---|---|
| Research | Manual interviews and surveys | AI-assisted data analysis and insights |
| Design | Static wireframes | Interactive prototypes generated quickly |
| Development | Full coding cycles | Partial automation and code generation |
| Testing | Manual QA cycles | Automated testing and feedback loops |

These changes allow teams to move from idea to working product much faster. However, speed must be balanced with clarity.

MVP Development Flow in 2026

Idea → Problem Validation → Rapid Prototype → User Testing → Iteration → MVP Launch

This flow shows a continuous cycle.
MVP development is not a one-time event. It is an evolving process.

The Cost Advantage of Modern MVPs

Cost has always been a concern in MVP development. In earlier years, even a basic product required a full team. Today, smaller teams can achieve similar outcomes.

Cost Comparison

| Component | Earlier Approach | 2026 Approach |
|---|---|---|
| Design | Dedicated design team | AI-assisted design tools |
| Development | Full-stack engineers | Hybrid AI and developer model |
| Testing | Separate QA team | Automated testing systems |
| Time | 3 to 6 months | 2 to 6 weeks |

Lower cost does not mean lower quality. It means fewer unnecessary steps.

Avoiding Common Mistakes

Even with better tools, teams still make avoidable errors in MVP development.

1. Building Too Much

Many teams add features before validating the core idea. This increases cost and delays learning.

2. Ignoring User Feedback

An MVP without feedback is incomplete. Data must guide decisions.

3. Over-Reliance on Tools

AI tools assist the process, but they do not define the product. Clear thinking is still required.

4. Weak Problem Definition

If the problem is unclear, the product will lack direction.

Speed vs Clarity in MVP Development

| Approach | Speed | Clarity | Outcome |
|---|---|---|---|
| Fast without validation | High | Low | Failure risk |
| Slow with structure | Low | High | Delayed learning |
| Balanced approach | Medium | High | Strong validation |

The goal is balance. Speed should support clarity, not replace it.

Role of Product Siddha in MVP Development

Structured MVP development often requires guidance. This is where Product Siddha contributes.

In the case study “Product Management for UAE’s First Lifestyle Services Marketplace,” the challenge was to define a product that could serve multiple user needs. Instead of building a large system, the team identified a core service layer and developed the MVP around it. User interactions were tracked carefully, and insights from early users shaped the next phase of development. This approach reduced risk and ensured that resources were used effectively.

Building with Limited Resources

Many founders assume that MVP development requires significant investment. In reality, constraints can improve focus. A small team with clear goals often performs better than a large team with unclear direction. The key is prioritization.

Practical Steps

- Define one primary use case
- Limit features to essential functions
- Track user behavior from day one
- Iterate based on real data

These steps apply across industries.

Measuring MVP Success

An MVP should produce measurable results. The right metrics depend on the product type, but common ones include:

- User engagement
- Conversion rates
- Retention over a short period
- Feedback quality

In the case study “Product Analytics for a Ride-Hailing App with Mixpanel,” tracking user behavior revealed gaps in the onboarding process. Adjustments were made quickly, which improved user retention without major development changes. Measurement allows teams to improve without guesswork.

When to Move Beyond the MVP

An MVP is not meant to last forever. It serves a purpose: once validation is achieved, the product must evolve. Signs that it is time to move forward include:

- Consistent user engagement
- Clear demand for additional features
- Stable core functionality

At this stage, development can expand with confidence.

A Steady Path Ahead

MVP development in 2026 is faster and more accessible. AI assistance reduces effort and shortens timelines. Yet the fundamentals remain unchanged. A clear problem, a focused solution, and measurable outcomes define a successful MVP. Tools can support this process, but they cannot replace it.

Teams that combine speed with discipline will build products that last. Those that focus only on speed may struggle to find direction. In the end, MVP development is not about launching quickly. It is about learning quickly and building with purpose.